Anthropic adds dreaming & multiagent tools for Claude

Thu, 7th May 2026

Anthropic has introduced dreaming, outcomes, multiagent orchestration and webhooks for Claude Managed Agents, expanding the tools available to developers using its agent platform.

Dreaming is being released as a research preview, while outcomes, multiagent orchestration and memory are available in public beta within Managed Agents.

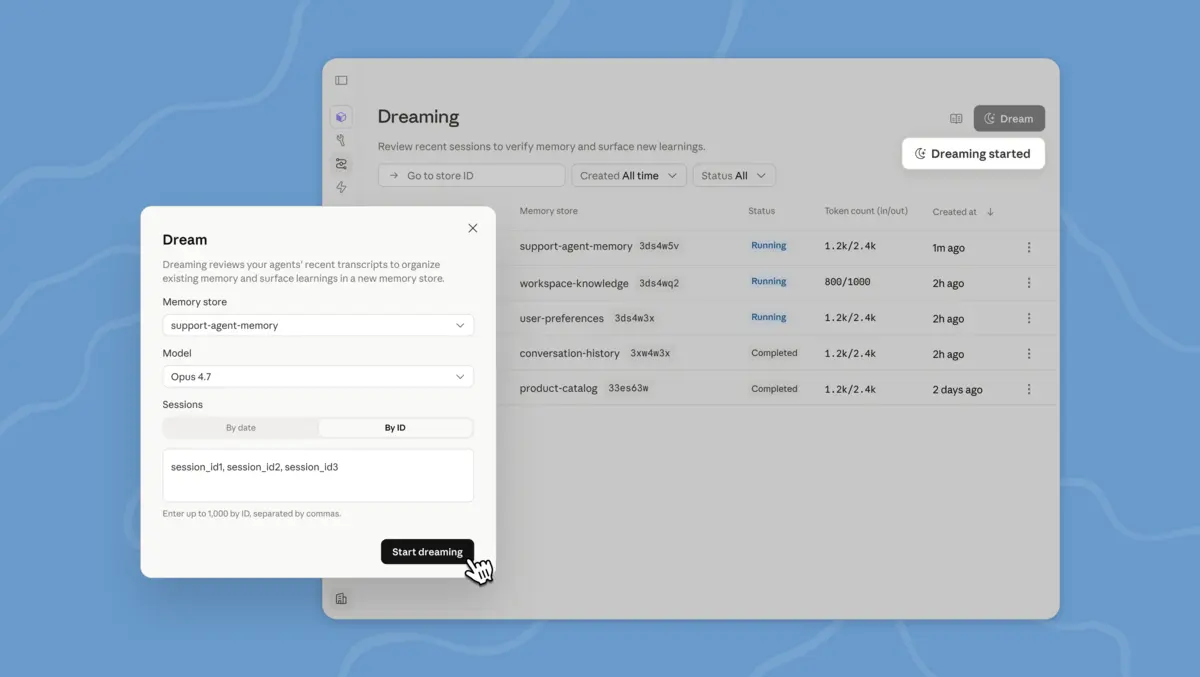

Dreaming is a scheduled process that reviews agent sessions and memory stores, identifies patterns and updates stored memory over time. Developers can choose whether those changes are applied automatically or reviewed first.

The update is part of a broader push to help agents improve through repeated use rather than relying only on prompts within a single session. Anthropic said dreaming can identify recurring mistakes, common workflows and preferences shared across teams, while restructuring memory as it grows.

Memory tools

Anthropic said dreaming works alongside the existing memory system in Managed Agents. Individual agents store lessons as they work, and dreaming reviews those records between sessions to draw out shared learning across agents and keep stored information current.
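The review-then-update cycle described above can be illustrated with a minimal sketch. It is not Anthropic's implementation or API: `MemoryStore`, `propose_updates`, `dream` and the `auto_apply` flag are hypothetical names, and "identifying patterns" is reduced here to counting notes that recur across sessions.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    # Hypothetical in-memory stand-in for an agent memory store.
    entries: set[str] = field(default_factory=set)


def propose_updates(session_notes: list[list[str]], min_sessions: int = 2) -> list[str]:
    """Return notes that appear in at least `min_sessions` separate sessions."""
    # set(notes) ensures a note repeated within one session is counted once.
    counts = Counter(note for notes in session_notes for note in set(notes))
    return sorted(note for note, n in counts.items() if n >= min_sessions)


def dream(store: MemoryStore, session_notes: list[list[str]], auto_apply: bool) -> list[str]:
    """Review past sessions; apply new lessons or return them for human review."""
    pending = [n for n in propose_updates(session_notes) if n not in store.entries]
    if auto_apply:
        store.entries.update(pending)
        return []  # nothing left to review
    return pending  # caller decides what to accept
```

With `auto_apply=False` the store is left untouched and the proposed entries are returned, which mirrors the option of reviewing changes before they are applied.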

Anthropic is also adding outcomes, which lets developers define a rubric for success and have an agent work towards that target. A separate grader then assesses the result in its own context window, an arrangement intended to keep the evaluation independent of the agent's reasoning.

If the result does not meet the stated standard, the grader identifies what needs to change and the agent produces another version. The approach is aimed at tasks where attention to detail, complete coverage or adherence to specific standards matters.
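As a rough illustration of that generate, grade and revise loop, the sketch below uses plain Python callables in place of the agent and the grader. Every name in it (`Verdict`, `run_with_outcome`, `toy_generate`, `toy_grade`, `max_attempts`) is an assumption made for the example and not part of Managed Agents; the toy grader simply checks that each rubric item appears in the draft.

```python
from typing import Callable, NamedTuple


class Verdict(NamedTuple):
    passed: bool
    feedback: str  # what the grader says still needs to change


def run_with_outcome(
    generate: Callable[[str, str], str],
    grade: Callable[[str, list[str]], Verdict],
    task: str,
    rubric: list[str],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Regenerate until the grader passes the draft or attempts run out."""
    feedback, draft = "", ""
    for attempt in range(1, max_attempts + 1):
        draft = generate(task, feedback)
        # The grader receives only the draft and the rubric, not the
        # generator's reasoning, echoing the separate-context idea.
        verdict = grade(draft, rubric)
        if verdict.passed:
            return draft, attempt
        feedback = verdict.feedback
    return draft, max_attempts


def toy_generate(task: str, feedback: str) -> str:
    # Hypothetical stand-in for an agent: folds feedback into the next draft.
    text = f"Report on {task}."
    return text + (" Includes: " + feedback if feedback else "")


def toy_grade(draft: str, rubric: list[str]) -> Verdict:
    # Hypothetical stand-in for a grader: lists rubric items not yet covered.
    missing = [item for item in rubric if item not in draft]
    return Verdict(not missing, ", ".join(missing))
```

Calling `run_with_outcome(toy_generate, toy_grade, "builds", ["summary", "risks"])` fails on the first draft, feeds the missing items back and passes on the second.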

In internal testing cited by Anthropic, outcomes improved task success by up to 10 points compared with a standard prompting loop. The company also reported gains in file generation quality, with task success rising by 8.4% for docx files and 10.1% for pptx files in internal benchmarks.

Webhooks are being added alongside outcomes so developers can define a target result, let the agent run and receive a notification when the work is finished. That allows agents to complete longer-running tasks without constant monitoring.
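A receiving endpoint for such notifications might look like the sketch below, built only on Python's standard library. The article does not describe the payload, so the `type` and `session_id` fields, the `session.completed` value and the port are all assumptions chosen for illustration; a real integration would follow Anthropic's documented schema and verify that requests are authentic.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Session IDs reported as finished (hypothetical field names throughout).
completed: list[str] = []


def handle_event(body: bytes) -> int:
    """Record a completion event and return the HTTP status to send back."""
    try:
        event = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400
    if not isinstance(event, dict):
        return 400
    if event.get("type") == "session.completed" and "session_id" in event:
        completed.append(event["session_id"])
    return 204  # acknowledge; unrecognised event types are ignored


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        status = handle_event(self.rfile.read(length))
        self.send_response(status)
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Block and listen for notifications on the given port."""
    HTTPServer(("", port), WebhookHandler).serve_forever()
```

Keeping the parsing in `handle_event` separates it from the HTTP plumbing, so the logic can be exercised without starting a server.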

Multiple agents

Multiagent orchestration is aimed at jobs that Anthropic said are too large or varied for one agent to handle well on its own. Under this model, a lead agent divides a task into parts and passes them to specialist subagents, each with its own model, prompt and tools.

Those agents can work in parallel on a shared filesystem and add their findings to the lead agent's broader context. The lead agent can also return to other agents during the workflow because events remain persistent and each agent retains a record of its work.
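The delegation pattern can be sketched with a thread pool, assuming each subagent is reduced to a named Python callable. `Subagent` and `orchestrate` are hypothetical names; a real subagent would wrap a model, prompt and tools, and the shared filesystem and persistent events are omitted here.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Subagent:
    name: str
    run: Callable[[str], str]  # stands in for a model + prompt + tools


def orchestrate(
    parts: dict[str, str], subagents: dict[str, Subagent]
) -> list[tuple[str, str]]:
    """Send each part to its named subagent in parallel and collect findings.

    `parts` maps a subagent name to the piece of work assigned to it.
    Findings are returned in the order the parts were given, which is the
    record a lead agent would fold back into its own context.
    """
    with ThreadPoolExecutor() as pool:
        futures = {
            name: pool.submit(subagents[name].run, part)
            for name, part in parts.items()
        }
        return [(name, future.result()) for name, future in futures.items()]
```

For example, a lead agent holding `{"logs": "build-1", "summary": "flaky tests"}` would receive one finding per subagent, each labelled with the name of the agent that produced it.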

Anthropic also highlighted visibility features in the Claude Console, where developers can trace which agent carried out each step, in what order and for what reason. That is intended to help teams understand how work was delegated across several agents.

Customer use

Anthropic cited several customer examples to show how the new tools are being applied. Harvey is using Managed Agents for legal drafting and document creation, and Anthropic said tests showed completion rates rose about sixfold when dreaming helped agents retain lessons such as filetype workarounds and tool-specific patterns between sessions.

At Netflix, the platform team built an analysis agent to process logs from hundreds of builds across different sources. Anthropic said multiagent orchestration allowed the system to analyse batches in parallel and identify recurring issues across large numbers of applications.

Spiral by Every is using multiagent orchestration and outcomes for its writing agent, which is delivered through an API and a command-line interface. Anthropic said a lead agent handles incoming requests and follow-up questions, then delegates drafting to subagents, which can generate multiple drafts in parallel and score them against editorial principles and the user's voice.

Wisedocs has built a document quality-check agent using outcomes to review work against internal guidelines. Anthropic said those reviews now run 50% faster while remaining aligned with team standards.

The update gives developers more structured ways to build agents that retain information across sessions, divide work among specialised systems and assess their own output before returning it. Dreaming is available in research preview, while outcomes, multiagent orchestration and memory are in public beta as part of Managed Agents.